合 Greenplum 7 新特性整理

- 新特性总结

- 快速拥有GPDB 7和gpcc 7环境

- 重要的新特性

- 内核

- 自动启用vacuuum

- 支持仅索引扫描和覆盖索引

- 引入监控视图可以监控长运行的操作

- 新增pg_backend_memory_contexts的系统视图

- 增加列不再需要重写表

- 分区表

- 其它

- Example 1: Creating a Range Partitioned Table

- Example 2: Classic Range Partitioned Table

- Example 3: Creating a List Partitioned Table

- Example 4: Classic List Partitioned Table

- Example 5: Creating a Hash Partitioned Table

- Example 6: Adding Hash Partitions

- Example 7: Creating a Composite Partitioned Table

- Example 8: Adding Composite Partitions

- Example 9: Adding Default Partition

- Example 10: Splitting Default Partition

- Example 11: Detaching Partitions

- Example 12: Attaching Existing Table

- Example 13: Checking Partition Information

- Example 14: Getting Partition Statistics

- Example 15: Truncate a Partition

- Example 16: Exchange Partition Data

- Example 17: Applying Constraints on Partitions

- Example 18: Creating an indexed Partitioned Tables

- Example 19: Rename Partitioned Tables

- Example 20: Dropping Partitions

- 支持hash索引

- 支持生成列

- AO表特性

- AO表分析可以更快

- AO表支持唯一索引、唯一约束和主键

- 引入gppkg v2

- 数据库维护

- 性能

- 优化器 Greenplum Query Optimizer (GPORCA)

- AO表 Append-Optimized Tables

- GP7移除的功能

- GP7系统视图

- GP7调优参数

- GP7动态分区裁剪

- 用户或角色不允许以“pg_”开头

- 参考

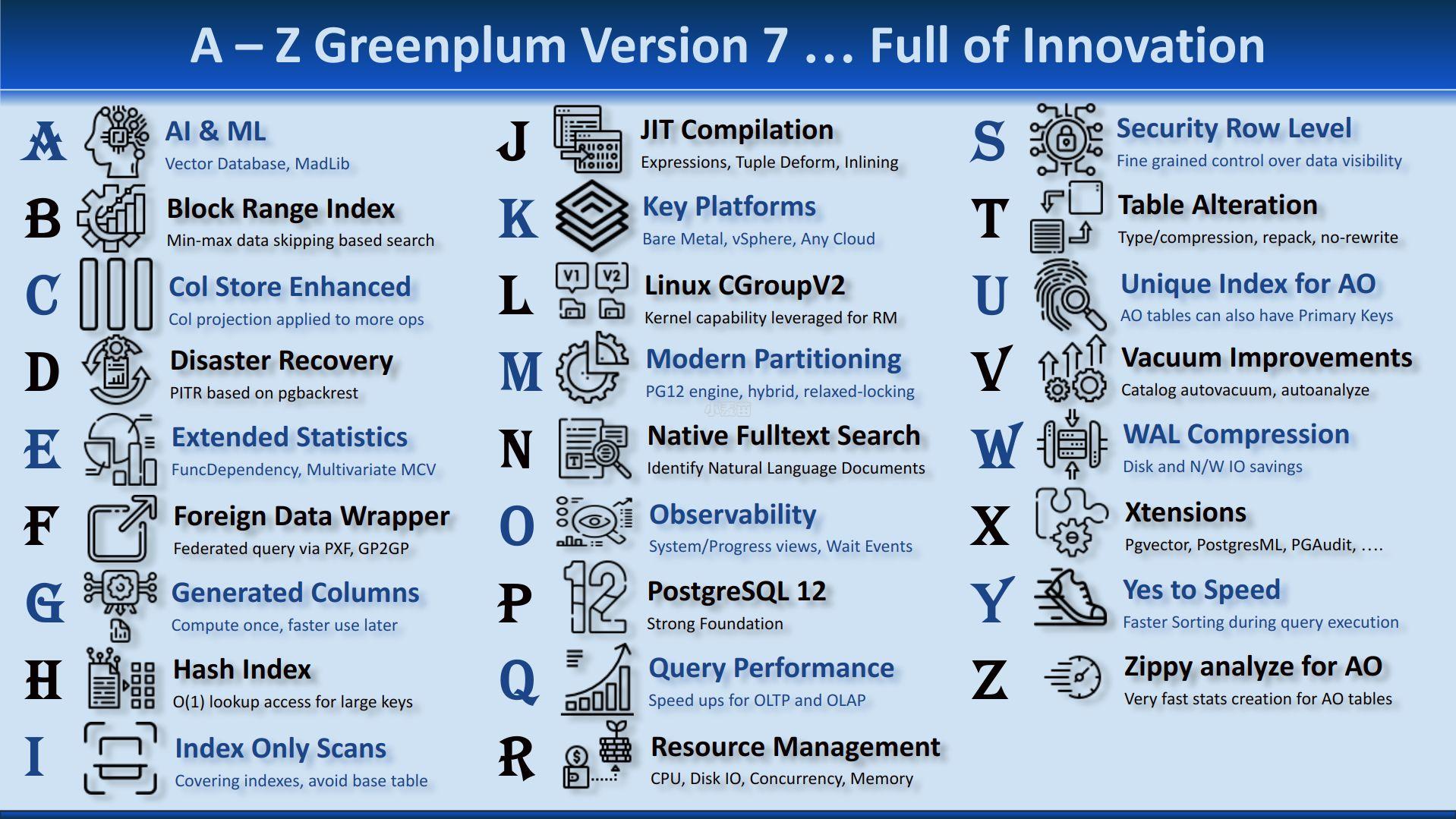

新特性总结

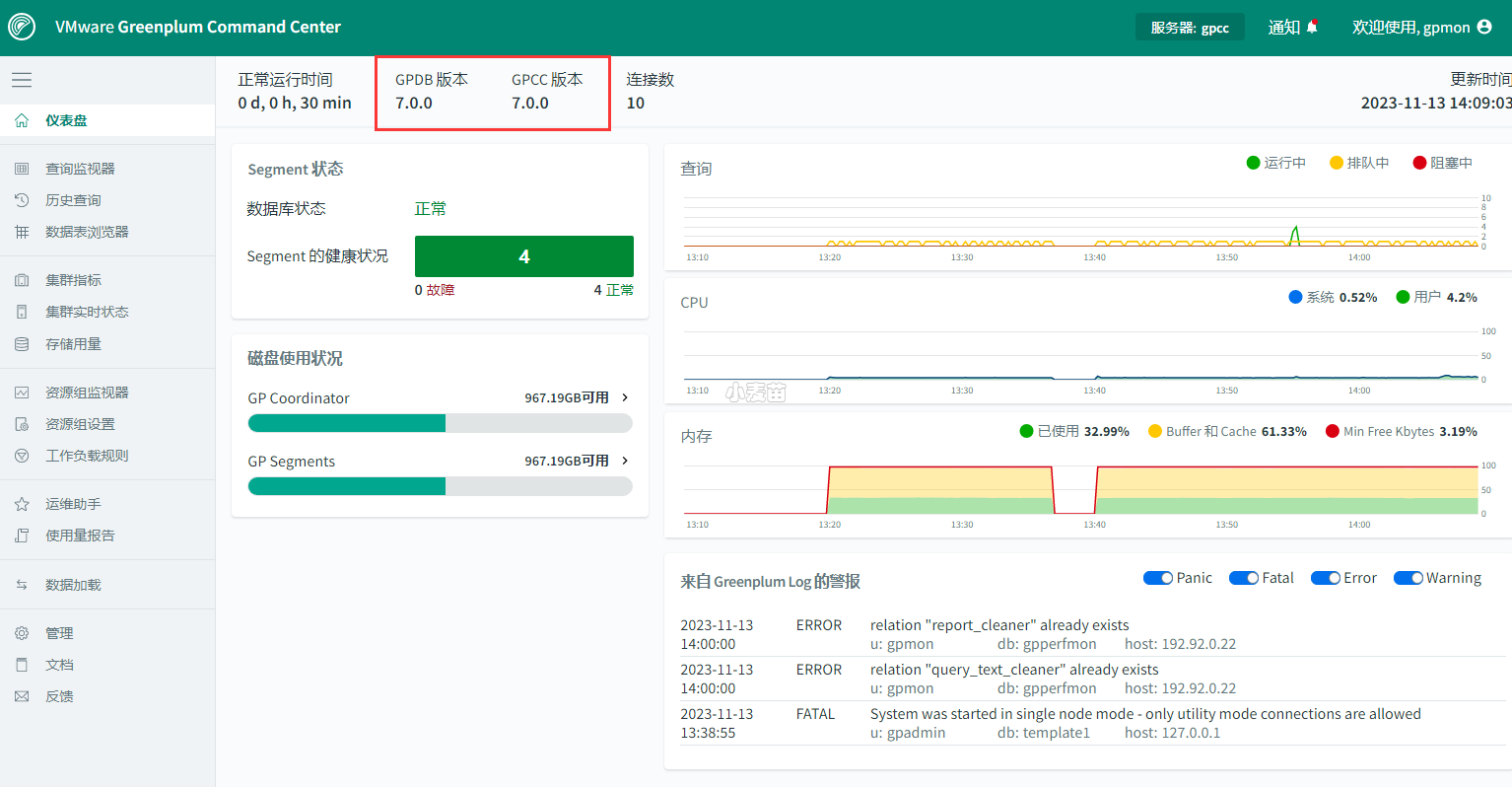

快速拥有GPDB 7和gpcc 7环境

参考:https://www.xmmup.com/zaidockerzhongkuaisutiyangreenplum-7-0-0.html

此docker包括1个master,1个standby master,2个segment,2个mirror实例;还包括gpcc 7.0.0

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 | docker rm -f gpdb7 docker run -itd --name gpdb7 -h gpdb7 \ -p 5437:5432 -p 28087:28080 \ -v /sys/fs/cgroup:/sys/fs/cgroup \ --privileged=true lhrbest/greenplum:7.0.0_v2 \ /usr/sbin/init docker exec -it gpdb7 bash su - gpadmin gpstart -a gpcc start gpcc status gpstate [gpadmin@gpdb7 ~]$ psql psql (12.12) Type "help" for help. postgres=# select version(); version ---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- PostgreSQL 12.12 (Greenplum Database 7.0.0 build commit:0a7a3566873325aca1789ae6f818c80f17a9402d Open Source) on x86_64-pc-linux-gnu, compiled by gcc (GCC) 8.5.0 20210514 (Red Hat 8.5.0-18), 64-bit compiled on Sep 20 2023 23:29:19 Bhuvnesh C. (1 row) postgres=# select * from gp_segment_configuration ; dbid | content | role | preferred_role | mode | status | port | hostname | address | datadir ------+---------+------+----------------+------+--------+------+----------+---------+-------------------------------------------- 1 | -1 | p | p | n | u | 5432 | gpdb7 | gpdb7 | /opt/greenplum/data/master/gpseg-1 6 | -1 | m | m | s | u | 5433 | gpdb7 | gpdb7 | /opt/greenplum/data/master_standby/gpseg-1 2 | 0 | p | p | n | u | 6000 | gpdb7 | gpdb7 | /opt/greenplum/data/primary/gpseg0 4 | 0 | m | m | n | d | 7000 | gpdb7 | gpdb7 | /opt/greenplum/data/mirror/gpseg0 3 | 1 | p | p | n | u | 6001 | gpdb7 | gpdb7 | /opt/greenplum/data/primary/gpseg1 5 | 1 | m | m | n | d | 7001 | gpdb7 | gpdb7 | /opt/greenplum/data/mirror/gpseg1 (6 rows) |

gpmon/lhr

重要的新特性

内核

Greenplum 7的内核从9.4.26升级到了12.12,其性能、功能均领先于Greenplum 6

自动启用vacuuum

缺省打开了autovacuum,会自动对系统表进行VACUUM和ANALYZE操作,并对用户表进行ANALYZE操作。

INSERT,UPDATE和DELETE影响的数据量大于下面的计算结果时,会自动对操作的表进行ANALYZE操作:

1 2 3 4 | autovacuum_analyze_scale_factor × reltuples + autovacuum_analyze_threshold -- 默认 0.1 * 行数 + 50 |

Automatic Vacuum is now enabled by default for all databases, which automatically performs VACUUM and ANALYZE operations against all catalog tables, as well as runs ANALYZE for all users tables in those databases.

- autovacuum

- autovacuum_analyze_scale_factor

- autovacuum_analyze_threshold

- autovacuum_freeze_max_age

- autovacuum_max_workers

- autovacuum_multixact_freeze_max_age

- autovacuum_naptime

- autovacuum_vacuum_cost_delay

- autovacuum_vacuum_cost_limit

- autovacuum_vacuum_scale_factor

- autovacuum_vacuum_threshold

- gp_autovacuum_scope

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 | [gpadmin@gpdb7 ~]$ gpconfig -s autovacuum Values on all segments are consistent GUC : autovacuum Coordinator value: on Segment value: on [gpadmin@gpdb7 ~]$ gpconfig -s autovacuum_analyze_scale_factor Values on all segments are consistent GUC : autovacuum_analyze_scale_factor Coordinator value: 0.1 Segment value: 0.1 [gpadmin@gpdb7 ~]$ [gpadmin@gpdb7 ~]$ gpconfig -s autovacuum_analyze_threshold Values on all segments are consistent GUC : autovacuum_analyze_threshold Coordinator value: 50 Segment value: 50 [gpadmin@gpdb7 ~]$ [gpadmin@gpdb7 ~]$ gpconfig -s autovacuum_max_workers Values on all segments are consistent GUC : autovacuum_max_workers Coordinator value: 3 Segment value: 3 [gpadmin@gpdb7 ~]$ gpconfig -s gp_autovacuum_scope Values on all segments are consistent GUC : gp_autovacuum_scope Coordinator value: catalog Segment value: catalog [gpadmin@gpdb7 ~]$ [gpadmin@gpdb7 ~]$ gpconfig -s autovacuum_naptime Values on all segments are consistent GUC : autovacuum_naptime Coordinator value: 1min Segment value: 1min [gpadmin@gpdb7 ~]$ [gpadmin@gpdb7 ~]$ ps -ef|grep cuum gpadmin 1252 1236 0 12:17 ? 00:00:00 postgres: 6000, autovacuum launcher gpadmin 1259 1241 0 12:17 ? 00:00:00 postgres: 6001, autovacuum launcher gpadmin 1279 1273 0 12:17 ? 00:00:00 postgres: 5432, autovacuum launcher gpadmin 2903 461 0 12:41 pts/1 00:00:00 grep --color=auto cuum [gpadmin@gpdb7 ~]$ gpconfig -c gp_autostats_mode -v on_change gpconfig -c gp_autostats_on_change_threshold -v 100000 |

示例:

1 2 3 4 5 6 7 8 | create table t8 as select * from pg_class limit 5; create table t9 as select * from pg_class limit 50; select * from pg_stat_all_tables where schemaname='public' and relname = 't9'; SELECT * from pg_stats d where d.tablename='t9'; |

支持仅索引扫描和覆盖索引

增加了对覆盖索引(covering index)的支持。覆盖索引允许用户使用INCLUDE 子句将额外的列添加到索引中,对于执行索引 (index-only)扫描非常有用,特别是对于那些不能被B-tree所索引的数据类型。 Greenplum 查询优化器部分支持索引扫描和覆盖索引。

注意:不能使用GitHub上的开源版本,否则不能使用仅索引扫描和覆盖索引!!!

Index-only scans can answer queries from an index alone without accessing the table’s heap, which significantly improves query performance. In addition, covering indexes allow you to add additional columns to an index using the INCLUDE clause, in order to make the use of index-only scans more effective. See Understanding Index-Only Scans and Covering Indexes for more details.

支持Index only的索引扫描,并支持CREATE INDEX的INCLUDE子句,允许在索引查询时不再回表,从而提升索引查询的性能。

测试环境:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 | drop table t1; drop table t2; drop table t3; create table t1 (id int, c1 int, c2 int, c3 int, info text, crt_time timestamp); create table t2(like t1); create table t3(like t1); create index idx_t1_1 on t1 (id) include(c1,c2,c3,info,crt_time); create index idx_t2_1 on t2 (id,c1,c2,c3,info,crt_time); create index idx_t3_1 on t3(id); \timing on insert into t1 select (1000*random())::int,1,1,1,'test',now() from generate_series(1,10000000); insert into t2 select (1000*random())::int,1,1,1,'test',now() from generate_series(1,10000000); insert into t3 select (1000*random())::int,1,1,1,'test',now() from generate_series(1,10000000); explain (analyze,verbose,timing,costs,buffers) select id,c1,c2,c3,info,crt_time from t1 where id=10000; explain (analyze,verbose,timing,costs,buffers) select id,c1,c2,c3,info,crt_time from t2 where id=10000; explain (analyze,verbose,timing,costs,buffers) select id,c1,c2,c3,info,crt_time from t3 where id=10000; analyze t1; analyze t2; analyze t3; select id,c1,c2,c3,info,crt_time from t1 where id=1000; select id,c1,c2,c3,info,crt_time from t2 where id=1000; select id,c1,c2,c3,info,crt_time from t3 where id=1000; create table t66(id int PRIMARY key, c1 text, crt_time timestamp); insert into t66 SELECT id, md5(id::text),now() FROM generate_series(1, 2000000) AS id; create index idx_t66_1 on t66 (id) include(c1); explain select id,c1 from t66 where id =100; |

视频:https://www.youtube.com/watch?v=J7_xLoq3E20&t=1383s

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 | db2=# explain select id,c1,c2,c3,info,crt_time from t1 where id=1000; QUERY PLAN -------------------------------------------------------------------------------- Gather Motion 1:1 (slice1; segments: 1) (cost=0.00..9.82 rows=9883 width=29) -> Index Scan using idx_t1_1 on t1 (cost=0.00..8.26 rows=4942 width=29) Index Cond: (id = 1000) Optimizer: GPORCA (4 rows) Time: 2.374 ms db2=# explain select id,c1,c2,c3,info,crt_time from t2 where id=1000; QUERY PLAN ---------------------------------------------------------------------------------- Gather Motion 1:1 (slice1; segments: 1) (cost=0.00..14.31 rows=10933 width=29) -> Index Scan using idx_t2_1 on t2 (cost=0.00..12.59 rows=5467 width=29) Index Cond: (id = 1000) Optimizer: GPORCA (4 rows) Time: 3.194 ms db2=# explain select id,c1,c2,c3,info,crt_time from t3 where id=1000; QUERY PLAN -------------------------------------------------------------------------------- Gather Motion 1:1 (slice1; segments: 1) (cost=0.00..9.40 rows=9775 width=29) -> Index Scan using idx_t3_1 on t3 (cost=0.00..7.86 rows=4888 width=29) Index Cond: (id = 1000) Optimizer: GPORCA (4 rows) Time: 2.755 ms db2=# explain (analyze,verbose,timing,costs,buffers) select id,c1,c2,c3,info,crt_time from t1 where id=10000; QUERY PLAN --------------------------------------------------------------------------------------------------------------------------- Gather Motion 1:1 (slice1; segments: 1) (cost=0.00..6.00 rows=1 width=29) (actual time=1.067..1.068 rows=0 loops=1) Output: id, c1, c2, c3, info, crt_time -> Index Scan using idx_t1_1 on public.t1 (cost=0.00..6.00 rows=1 width=29) (actual time=0.000..0.778 rows=0 loops=1) Output: id, c1, c2, c3, info, crt_time Index Cond: (t1.id = 10000) Optimizer: GPORCA Planning Time: 1.878 ms (slice0) Executor memory: 18K bytes. (slice1) Executor memory: 42K bytes (seg1). Memory used: 128000kB Execution Time: 1.372 ms (11 rows) Time: 3.683 ms db2=# explain (analyze,verbose,timing,costs,buffers) select id,c1,c2,c3,info,crt_time from t2 where id=10000; QUERY PLAN --------------------------------------------------------------------------------------------------------------------------- Gather Motion 1:1 (slice1; segments: 1) (cost=0.00..6.00 rows=1 width=29) (actual time=0.308..0.308 rows=0 loops=1) Output: id, c1, c2, c3, info, crt_time -> Index Scan using idx_t2_1 on public.t2 (cost=0.00..6.00 rows=1 width=29) (actual time=0.000..0.082 rows=0 loops=1) Output: id, c1, c2, c3, info, crt_time Index Cond: (t2.id = 10000) Optimizer: GPORCA Planning Time: 1.940 ms (slice0) Executor memory: 18K bytes. (slice1) Executor memory: 42K bytes (seg1). Memory used: 128000kB Execution Time: 0.550 ms (11 rows) Time: 2.890 ms db2=# explain (analyze,verbose,timing,costs,buffers) select id,c1,c2,c3,info,crt_time from t3 where id=10000; QUERY PLAN --------------------------------------------------------------------------------------------------------------------------- Gather Motion 1:1 (slice1; segments: 1) (cost=0.00..6.00 rows=1 width=29) (actual time=0.329..0.330 rows=0 loops=1) Output: id, c1, c2, c3, info, crt_time -> Index Scan using idx_t3_1 on public.t3 (cost=0.00..6.00 rows=1 width=29) (actual time=0.000..0.066 rows=0 loops=1) Output: id, c1, c2, c3, info, crt_time Index Cond: (t3.id = 10000) Optimizer: GPORCA Planning Time: 1.971 ms (slice0) Executor memory: 18K bytes. (slice1) Executor memory: 42K bytes (seg1). Memory used: 128000kB Execution Time: 0.626 ms (11 rows) Time: 3.070 ms db2=# db2=# explain select id,c1 from t66 where id =100; QUERY PLAN ----------------------------------------------------------------------------- Gather Motion 1:1 (slice1; segments: 1) (cost=0.00..6.00 rows=1 width=37) -> Index Scan using t66_pkey on t66 (cost=0.00..6.00 rows=1 width=37) Index Cond: (id = 100) Optimizer: GPORCA (4 rows) Time: 3.489 ms |

引入监控视图可以监控长运行的操作

引入了pg_stat_progress_vacuum/copy/create_index等进度视图。

Greenplum Database can report the progress of ANALYZE, CLUSTER, COPY, CREATE INDEX, REINDEX, and VACUUM commands during command execution. Greenplum can also report the progress of a running base backup (initiated during gprecoverseg -F) command invocation, allowing you to monitor the progress of these possibly long-running operations.

- gp_stat_progress_analyze

- gp_stat_progress_basebackup

- gp_stat_progress_cluster

- gp_stat_progress_copy

- gp_stat_progress_create_index

- gp_stat_progress_vacuum

1 2 3 4 5 6 | select * from gp_stat_progress_analyze; select * from gp_stat_progress_basebackup; select * from gp_stat_progress_cluster; select * from gp_stat_progress_copy ; select * from gp_stat_progress_create_index; select * from gp_stat_progress_vacuum ; |

参考:

https://greenplum.org/progress-reporting-views-in-greenplum-7/

https://greenplum.org/partition-in-greenplum-7-recursion-and-inheritance/

新增pg_backend_memory_contexts的系统视图

新增了一个名为pg_backend_memory_contexts的系统视图,可以查看后台的内存使用情况。

1 | SELECT * from pg_backend_memory_contexts d ORDER BY d.total_bytes desc ; |

增加列不再需要重写表

Greenplum Database no longer rewrites the table when a column is added to a table (ALTER TABLE ... ADD COLUMN ...).